AI Agent Post-Mortems Blame the Model. The Credentials Did It.

Real AI agent incidents share one root cause teams keep missing: credential architecture. Here's what those post-mortems get wrong every time.

Practical security insights and product updates from the team building safer, simpler key management for modern APIs.

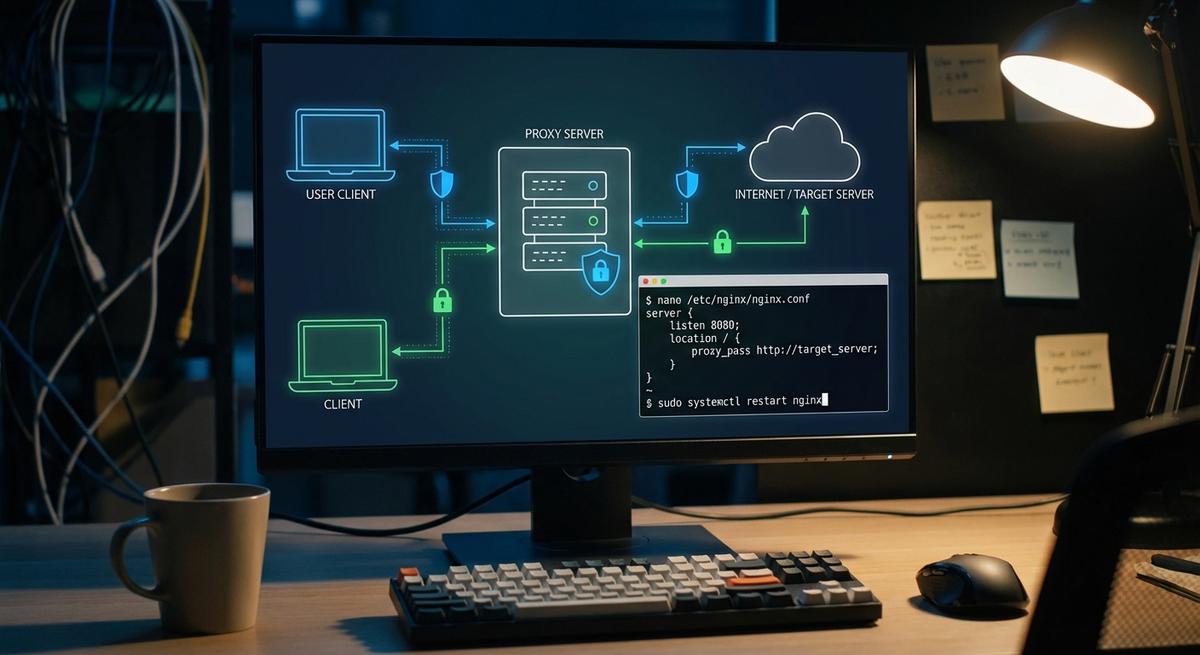

Giving your AI agent a real API key is the vulnerability, not the config. Phantom tokens let agents call real APIs without ever touching actual credentials. Here's the architecture that changes your blast radius.

Five attacks: prompt injection, replay, scope escalation, enumeration, and confused deputy. Four passed. One revealed a real gap. Here's the breakdown.

Every sandbox tool assumes the agent already has your real API keys. That's the problem, not the solution.

When AI agents go wrong, everyone blames the model. They're wrong. A forensic look at real incidents shows the same credential architecture failure, every time.

We run AI agents in production that handle Telegram, GitHub, Reddit, and HN integrations. Here's exactly how we use API Stronghold to keep credentials out of agent context.

MCPGuard secures MCP traffic. API Stronghold secures the credentials inside it. Here's the difference, when you need each, and why most teams need both.

When multiple AI agents share the same credentials, one compromised agent exposes everything. Here's how to give each agent its own isolated session with the exact keys it needs.

Run API Stronghold as a credential proxy for OpenClaw so agents never hold real API keys. Fake keys in, real credentials injected at the proxy, nothing reaches the LLM context window.
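To make the "fake keys in, real credentials injected at the proxy" idea concrete, here is a minimal in-memory sketch. It is not API Stronghold's or OpenClaw's actual interface: `CredentialProxy`, `issue_phantom`, and `outbound_headers` are hypothetical names, and a real proxy would sit on the network path rather than in the agent's process.

```python
import secrets


class CredentialProxy:
    """Hypothetical sketch: maps phantom tokens to real keys held only here."""

    def __init__(self):
        # phantom token -> real API key; the agent never sees the values
        self._vault = {}

    def issue_phantom(self, real_key: str) -> str:
        """Return a random placeholder the agent can hold instead of the key."""
        phantom = "phantom_" + secrets.token_urlsafe(16)
        self._vault[phantom] = real_key
        return phantom

    def outbound_headers(self, headers: dict) -> dict:
        """Swap the phantom bearer token for the real key on the way out."""
        scheme, _, token = headers.get("Authorization", "").partition(" ")
        real_key = self._vault.get(token)
        if scheme != "Bearer" or real_key is None:
            raise PermissionError("unknown or malformed phantom token")
        # Copy the headers so the agent-side dict still holds only the phantom
        return {**headers, "Authorization": f"Bearer {real_key}"}


proxy = CredentialProxy()
phantom = proxy.issue_phantom("sk-real-example")  # placeholder key, assumption
agent_headers = {"Authorization": f"Bearer {phantom}"}
upstream_headers = proxy.outbound_headers(agent_headers)
```

In this sketch the agent-side headers, which are what could end up in the LLM context window, only ever contain the phantom value; the real key appears only in the headers the proxy sends upstream.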

Stop hardcoding API keys into MCP servers. Here's the register→scope→issue→validate→expire pattern with real endpoints platform engineers can deploy today.
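The register→scope→issue→validate→expire lifecycle can be sketched in a few lines. This is an illustrative in-memory model under stated assumptions, not the endpoints the post refers to: `TokenIssuer`, `Grant`, and every method name are hypothetical, and a deployed version would expose these steps over HTTP with persistent storage.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    agent_id: str
    scopes: frozenset
    expires_at: float


class TokenIssuer:
    """Hypothetical sketch of register -> scope -> issue -> validate -> expire."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock   # injectable so expiry can be tested
        self._agents = {}     # agent_id -> scopes the agent may request
        self._grants = {}     # token -> Grant

    def register(self, agent_id, allowed_scopes):
        # register + scope: record the agent and the most it may ever ask for
        self._agents[agent_id] = frozenset(allowed_scopes)

    def issue(self, agent_id, scopes, ttl_seconds):
        # issue: mint a short-lived token limited to a subset of allowed scopes
        allowed = self._agents.get(agent_id)
        if allowed is None:
            raise KeyError(f"agent {agent_id!r} is not registered")
        requested = frozenset(scopes)
        if not requested <= allowed:
            raise PermissionError("requested scopes exceed registration")
        token = secrets.token_urlsafe(24)
        expires_at = self._clock() + ttl_seconds
        self._grants[token] = Grant(agent_id, requested, expires_at)
        return token

    def validate(self, token, scope):
        # validate: the token must exist, be unexpired, and carry the scope
        grant = self._grants.get(token)
        if grant is None:
            return False
        if self._clock() >= grant.expires_at:
            del self._grants[token]  # expire: drop the grant once past its TTL
            return False
        return scope in grant.scopes


# Usage with a fake clock so expiry is deterministic
now = [0.0]
issuer = TokenIssuer(clock=lambda: now[0])
issuer.register("mcp-github", {"repo:read", "issues:write"})
token = issuer.issue("mcp-github", {"repo:read"}, ttl_seconds=60)
ok_before = issuer.validate(token, "repo:read")
wrong_scope = issuer.validate(token, "issues:write")
now[0] = 61.0
ok_after = issuer.validate(token, "repo:read")
```

Issuing a subset of the registered scopes with a short TTL is the design choice that bounds blast radius here: a leaked token is useful only for the narrow scope it was minted for, and only until it expires.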